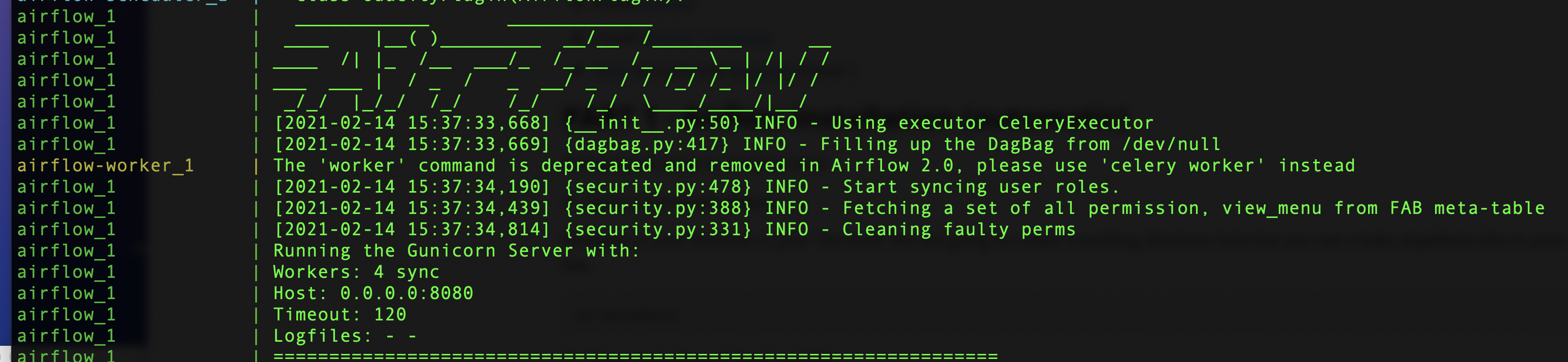

In order to tackle this, I created a database migration tool which would become the first step in my ETL workflow. If you fail to do so the ETL pipeline will start failing. While you are building the ETL tool, you may end up fetching a new field from MongoDB, but at the same time, you have to add that column to the corresponding Redshift table. We discuss the challenges faced and the solutions below: Keeping your ETL code changes in sync with Redshift schema We faced several challenges while getting the above ETL workflow to be near real-time and fault tolerant. Features like task retries on failure handling are a plus. You can define DAG as Python code and it also enables you to handle the state of your DAG run using environment variables. After doing some research I settled for Apache Airflow. Airflow is a Python-based scheduler where you can define DAGs ( Directed Acyclic Graphs), which would run as per the given schedule and run tasks in parallel in each phase of your ETL. One option was to develop our own framework based on threads or developing a distributed task scheduler tool using a message broker tool like Celery combined with RabbitMQ. One of the major challenges was to achieve parallelism while running the ETL tasks. I kept each operation atomic so that multiple instances of each operation can run independently of each other, which will help to achieve parallelism. I used the PyMongo library to interact with MongoDB and the Boto library for interacting with Redshift and S3. I began by writing a Python-based command line tool which supported different phases of ETL, like extracting data from MongoDB, processing extracted data locally, uploading the processed data to S3, loading data from S3 to Redshift, post-processing and cleanup. In this blog, I am going to talk about my learning around building a custom ETL solution which involved moving data from MongoDB to Redshift using Apache Airflow. This is why a majority of ETL solutions are custom built manually, from scratch. Many ETL solutions are available in the market which kind-of solves the issue, but the key part of an ETL process lies in its ability to transform or process raw data before it is pushed to its destination.Įach ETL pipeline comes with a specific business requirement around processing data which is hard to be achieved using off-the-shelf ETL solutions. It included extracting data from MongoDB collections, perform transformations and then loading it into Redshift tables. Recently, I was involved in building an ETL (Extract-Transform-Load) pipeline.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed